Michael Beveridge

Plan Fit: Building Decision Support for Medicare Plan Selection

A case study in data science, iterative model design, and AI-assisted development.

My Role

I owned discovery, data modeling, and tool design for Spark Plan Fit. This was a personal challenge first and a formal project second: I wanted to dig into CMS data, build my own database, and create working tools that wouldn't have been possible for me a year or two prior. I worked with Claude as an AI development partner (Python, business logic, data validation) and a colleague who brought enrollment data and carrier expertise. My contribution was the end-to-end design of the scoring model, the elimination logic, and the validation approach. I love learning by building!

The Problem

Medicare Advantage is the private-plan alternative to government-run Medicare, covering over 30 million Americans. Each year during open enrollment, beneficiaries choose from an average of 40+ competing plans, each with its own premiums, copays, deductibles, drug coverage, and supplemental benefits.

Most people choose poorly. Research shows that 90% of beneficiaries select a suboptimal plan, overspending an average of $1,260 per year. Across the Medicare Advantage population, that adds up to roughly $42 billion in annual waste. This isn't from fraud or inefficiency, but from people picking the wrong plan off a list.

Even licensed insurance agents, who sell Medicare plans for a living, struggle with this complexity. The best agents produce outcomes roughly twice as good as the worst. The majority fall somewhere in the middle, doing their best with the same overwhelming plan data their clients face.

In 2020, a research team demonstrated that AI-powered decision support for agents could reduce beneficiary overspending by $278 per year and double the rate of optimal plan selection. The finding was clear: give agents better tools, and outcomes improve dramatically. But as of 2025, no one had built and deployed those tools.

Nine out of ten beneficiaries left money on the table, and for most of them, it wasn't a small amount.

The Concept: An Elimination Funnel

The typical approach to plan selection is comparison: lay out the options and let people choose. Plan Fit takes the opposite approach. Instead of helping someone choose from 40+ plans, remove the ones that are objectively worse until only a handful remain.

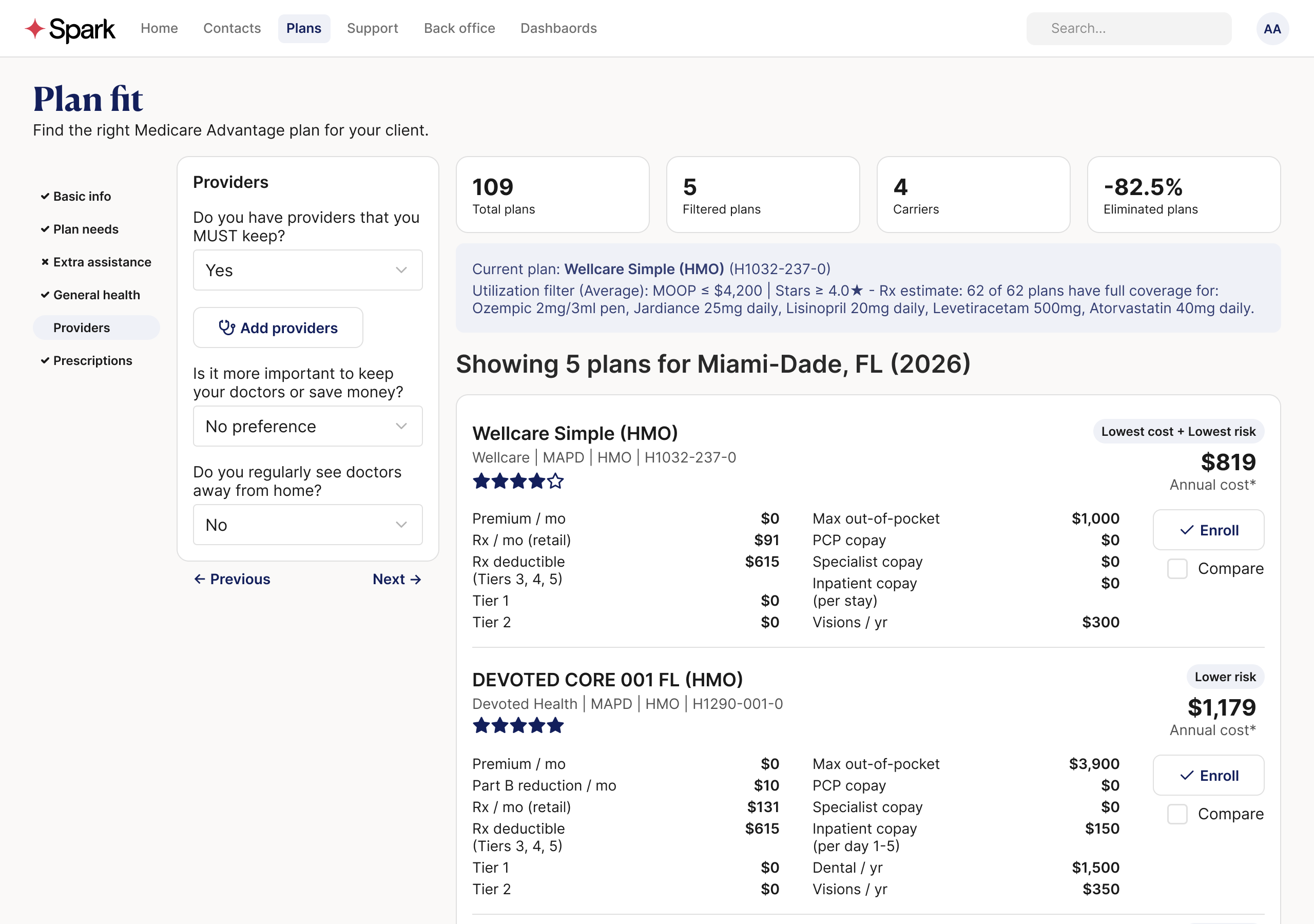

The system works as a funnel. First, eligibility filters narrow the universe to plans available in the beneficiary's county and matching their Medicare status. Next, plans are screened against the beneficiary's actual needs: health conditions, prescriptions, and preferred providers.

The final stage is the most aggressive: Pareto elimination. If Plan A is cheaper and lower-risk than Plan B, then Plan B is dominated. There's no reason to consider it. It turns out that in most markets, the vast majority of plans are dominated. Applying this logic typically eliminates over 80% of options without asking the beneficiary a single preference question.
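The dominance test can be sketched in a few lines of Python. This is an illustration under stated assumptions, not Plan Fit's actual code: the `Plan` fields, the sample numbers, and the use of maximum exposure as a stand-in for "risk" are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Plan:
    plan_id: str         # hypothetical identifier
    annual_cost: float   # dollars per year, lower is better
    max_exposure: float  # assumed stand-in for "risk", lower is better


def dominates(a: Plan, b: Plan) -> bool:
    """True if a is no worse than b on both axes and strictly better on at least one."""
    no_worse = a.annual_cost <= b.annual_cost and a.max_exposure <= b.max_exposure
    better = a.annual_cost < b.annual_cost or a.max_exposure < b.max_exposure
    return no_worse and better


def pareto_frontier(plans: list[Plan]) -> list[Plan]:
    """Keep only the plans that no other plan dominates."""
    return [p for p in plans if not any(dominates(q, p) for q in plans)]


# Made-up numbers for illustration only.
plans = [
    Plan("A", 1200.0, 4000.0),
    Plan("B", 1500.0, 5500.0),  # dominated by A: costs more and carries more exposure
    Plan("C", 2400.0, 2500.0),  # costs more than A but has lower exposure: a real tradeoff
    Plan("D", 2600.0, 4200.0),  # dominated by both A and C
]
survivors = pareto_frontier(plans)
print([p.plan_id for p in survivors])
```

In this toy market, only A and C survive. Neither is better than the other on both axes, which is exactly the kind of tradeoff the shortlist is meant to surface.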

What's left is a shortlist of 5–7 plans that represent genuine tradeoffs. Only one preference question matters: do you prioritize lower fixed costs or better protection against high medical bills?

Remove plans that are objectively worse until only a handful remain.

Building the Foundation

CMS publishes everything you'd need to compare Medicare Advantage plans: benefits, costs, drug formularies, star ratings, service areas. But it's scattered across dozens of files in inconsistent formats. No single download gives you a usable picture. The first task was turning that raw material into something queryable.

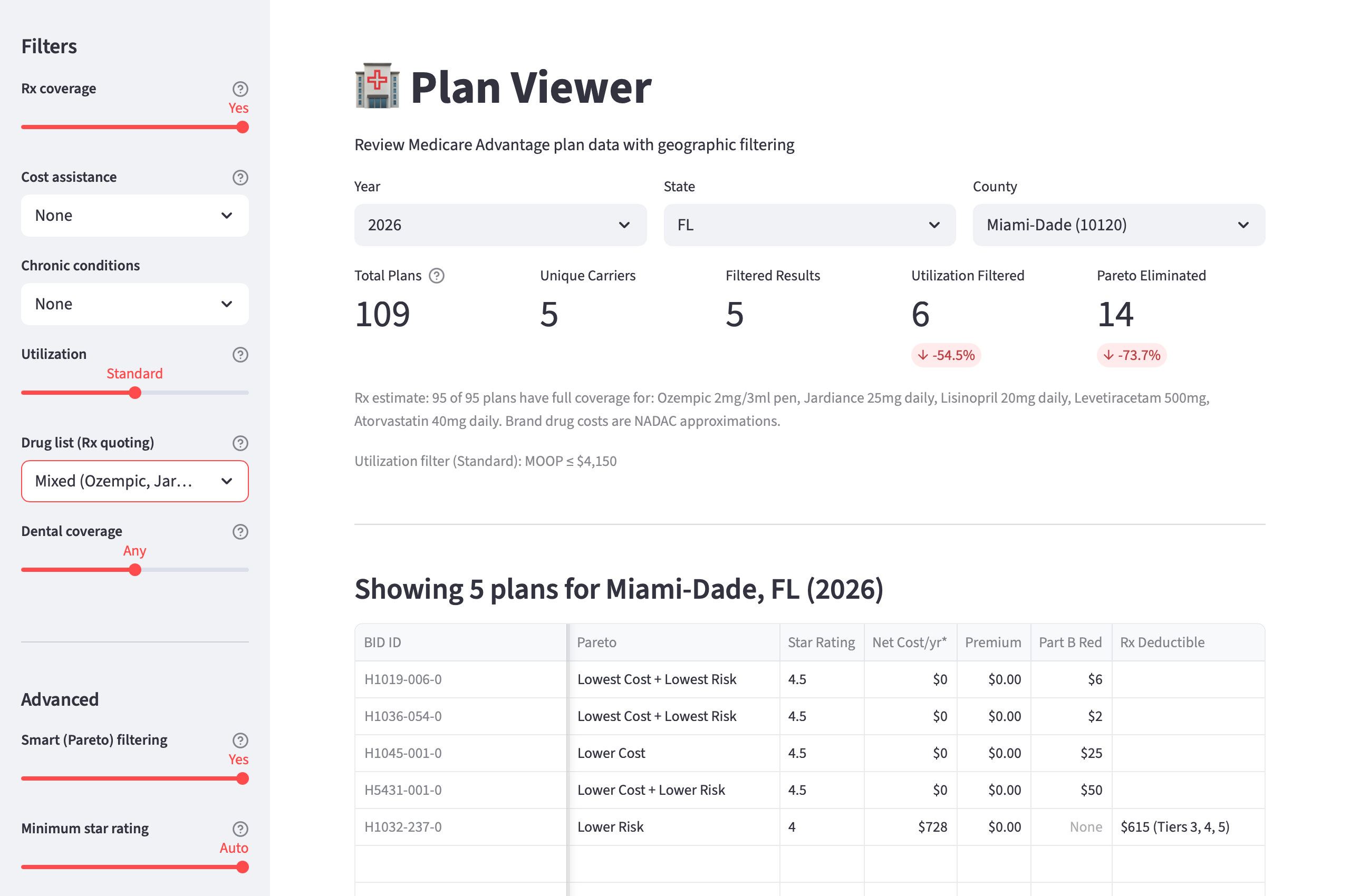

I built a SQLite database consolidating CMS data into a single local store: 8,000+ plan-segments, 2.3 million service area records, star ratings, drug tier structures. On top of that, a Python library for querying, filtering, and scoring plans, and Streamlit applications for interactive exploration and plan comparison.
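To give a feel for what "queryable" means here, the sketch below builds a tiny in-memory SQLite database and looks up the plans offered in one county. The table names, column names, plan IDs, and county codes are hypothetical placeholders; the real schema is not shown in this case study.

```python
import sqlite3

# Hypothetical schema and made-up rows, for illustration only.
conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE plans (
        plan_id TEXT PRIMARY KEY,
        plan_name TEXT,
        monthly_premium REAL,
        star_rating REAL
    );
    CREATE TABLE service_areas (
        plan_id TEXT REFERENCES plans(plan_id),
        county_fips TEXT
    );
    CREATE INDEX idx_service_areas_county ON service_areas(county_fips);
    """
)
conn.executemany(
    "INSERT INTO plans VALUES (?, ?, ?, ?)",
    [
        ("PLAN-1", "Example HMO", 0.0, 3.5),
        ("PLAN-2", "Example PPO", 29.0, 4.5),
        ("PLAN-3", "Other County HMO", 0.0, 4.0),
    ],
)
conn.executemany(
    "INSERT INTO service_areas VALUES (?, ?)",
    [("PLAN-1", "00001"), ("PLAN-2", "00001"), ("PLAN-3", "00002")],
)


def plans_in_county(conn: sqlite3.Connection, county_fips: str) -> list[tuple]:
    """Return (plan_id, name, premium, stars) for every plan offered in the county."""
    query = """
        SELECT p.plan_id, p.plan_name, p.monthly_premium, p.star_rating
        FROM plans AS p
        JOIN service_areas AS s ON s.plan_id = p.plan_id
        WHERE s.county_fips = ?
        ORDER BY p.monthly_premium, p.plan_id
    """
    return conn.execute(query, (county_fips,)).fetchall()


available = plans_in_county(conn, "00001")
print(available)
```

With millions of service area rows, an index on the county column (as in the sketch) is the kind of detail that keeps this lookup fast on a local store.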

I used Claude as a development partner throughout - writing the ETL pipeline, building the Python library, working through business logic decisions. This is an important distinction: AI was the right tool for building the system, but the wrong tool for answering the questions the system needs to answer. Plan recommendations need to be deterministic and auditable - grounded in actual plan data, not generated. A generative model can help you write the code that compares 8,000 plans across dozens of dimensions; it can't reliably do the comparison itself. Knowing where that line is shaped every decision in this project.

The First Model

The Pareto concept is straightforward: if Plan A beats Plan B on every dimension you care about, there's no reason to consider Plan B. It's dominated. The challenge is defining the right dimensions.

For the first model, I chose three axes: net cost (what the plan costs you annually), risk exposure (how much you could owe if something goes wrong), and supplemental benefits (dental, vision, hearing, and other extras). The idea was that these three dimensions capture the core tradeoff Medicare Advantage plans are making. CMS gives carriers a fixed revenue pool per member, and carriers allocate it differently across premiums, cost-sharing, and benefits.

I also introduced epsilon thresholds: small tolerance bands on each axis so that plans within a few hundred dollars of each other aren't treated as meaningfully different. Without these, trivial differences create false winners.
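One way to express epsilon thresholds in code is shown below. It is a sketch under assumptions, not the model's actual rule: the tolerance values are invented, and the definition used here (no worse on every axis, and better by more than the tolerance on at least one) is only one of several reasonable ways to define epsilon dominance.

```python
from typing import NamedTuple


class ScoredPlan(NamedTuple):
    plan_id: str
    net_cost: float        # dollars per year, lower is better
    risk_exposure: float   # dollars per year, lower is better
    benefits_value: float  # dollars per year, higher is better


# Hypothetical tolerance bands, in dollars, one per axis.
EPSILON = (200.0, 300.0, 100.0)


def as_costs(p: ScoredPlan) -> tuple[float, float, float]:
    """Flip benefits so that lower is better on every axis."""
    return (p.net_cost, p.risk_exposure, -p.benefits_value)


def eps_dominates(a: ScoredPlan, b: ScoredPlan, eps=EPSILON) -> bool:
    """a eliminates b only if a is no worse everywhere and clearly better somewhere."""
    a_c, b_c = as_costs(a), as_costs(b)
    no_worse = all(x <= y for x, y in zip(a_c, b_c))
    clearly_better = any(y - x > tol for x, y, tol in zip(a_c, b_c, eps))
    return no_worse and clearly_better


def eps_frontier(plans: list[ScoredPlan]) -> list[ScoredPlan]:
    return [p for p in plans if not any(eps_dominates(q, p) for q in plans)]


# Made-up numbers for illustration only.
plans = [
    ScoredPlan("P1", 1000.0, 4000.0, 500.0),
    ScoredPlan("P2", 1050.0, 4100.0, 480.0),  # slightly worse than P1, but within tolerance
    ScoredPlan("P3", 1800.0, 5000.0, 300.0),  # clearly worse than P1 on every axis
    ScoredPlan("P4", 2500.0, 2000.0, 500.0),  # pricier, but much lower risk exposure
]
kept = [p.plan_id for p in eps_frontier(plans)]
print(kept)
```

Under plain Pareto dominance, P1 would knock out P2 over a $50 cost difference. With the tolerance bands, P2 stays in the pool because nothing beats it by a meaningful margin.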

Testing across six counties, the results were dramatic: more than 85% of plans were eliminated without asking the beneficiary anything. In most markets, the vast majority of plans are objectively worse on all three dimensions than a small handful of alternatives. It felt like a breakthrough.

Reality Check

The elimination rates looked great on paper. But a model that removes 80% of plans is only useful if the plans it keeps are actually good. To find out, I backtested Model 1 against 152,000 real enrollments from the most recent Annual Enrollment Period, using two outcome metrics: quality enrollment rate (did the beneficiary stay on the plan?) and rapid disenrollment rate (did they leave within the first few months?).
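The two metrics are simple ratios over the enrollments that landed on recommended plans. The sketch below shows the arithmetic on made-up records. The record layout is hypothetical, and the 3-month cutoff for "rapid" is an assumption standing in for "the first few months."

```python
from dataclasses import dataclass
from typing import Optional

RAPID_MONTHS = 3  # assumption: cutoff for "left within the first few months"


@dataclass
class Enrollment:
    plan_id: str
    # Months until the member left the plan; None means they were still enrolled
    # at the end of the observation window (hypothetical representation).
    months_until_disenrollment: Optional[int]


def outcome_rates(enrollments: list[Enrollment], recommended_ids: set[str]) -> Optional[dict]:
    """Quality and rapid-disenrollment rates for enrollments on recommended plans."""
    cohort = [e for e in enrollments if e.plan_id in recommended_ids]
    if not cohort:
        return None  # nothing to measure
    n = len(cohort)
    stayed = sum(1 for e in cohort if e.months_until_disenrollment is None)
    rapid = sum(
        1
        for e in cohort
        if e.months_until_disenrollment is not None
        and e.months_until_disenrollment <= RAPID_MONTHS
    )
    return {"n": n, "quality_rate": stayed / n, "rapid_disenrollment_rate": rapid / n}


# Made-up records for illustration only.
records = [
    Enrollment("A", None),
    Enrollment("A", None),
    Enrollment("C", 2),     # left after two months: rapid disenrollment
    Enrollment("C", 7),     # left later: neither "quality" nor "rapid"
    Enrollment("B", None),  # plan B is not recommended, so it is excluded
]
rates = outcome_rates(records, recommended_ids={"A", "C"})
print(rates)
```

Note that the two rates do not have to sum to 100%: a member who leaves after the rapid window counts against quality without counting as a rapid disenrollment.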

The results were sobering. Plans the model recommended because they were the lowest cost had a 59.6% quality rate and 13.7% rapid disenrollment. Roughly 1 in 7 beneficiaries left those plans almost immediately. Plans recommended for lowest risk, by contrast, had a 90.5% quality rate and just 3.9% rapid disenrollment. Cost optimization wasn't just imprecise. It was actively harmful: the lowest-cost recommendations had a rapid disenrollment rate more than three times that of the lowest-risk recommendations (13.7% versus 3.9%).

When I dug into why, the failures traced back to specific axis problems. The cost axis was dominated by $0-premium plans that looked great on paper but had narrow networks and poor member experiences - the cheapest plans were cheap for a reason. The benefits axis (supplemental benefits) was noisy and correlated with cost rather than adding independent signal. And the model had no awareness of plan quality or carrier track record - it treated a plan from a carrier with 90% retention the same as one from a carrier with 63%.

The model wasn't just missing the target. On the cost axis, it was recommending plans that caused the exact problem we set out to solve.

The Iteration

The diagnosis pointed to several possible fixes: recalibrating the cost axis thresholds, adding carrier-aware scoring, flagging certain plan types for specific agent channels, removing the benefits axis entirely. I explored half a dozen approaches before one emerged as clearly superior.

The fix had two parts. First, I dropped the benefits axis from the Pareto model. It was adding noise without improving outcomes. Supplemental benefits are still displayed to agents, but they no longer influence which plans survive elimination. Second, I added a quality gate that runs before the Pareto elimination: plans need a CMS star rating of 4.0 or higher to enter the elimination pool. In markets where that threshold is too aggressive, the gate relaxes to 3.0+ so the model doesn't produce dead ends.
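A star gate with a fallback can be sketched as follows. The 4.0 and 3.0 thresholds come from the description above; everything else is an assumption for illustration, including the rule for when a threshold is "too aggressive" (here, fewer than a hypothetical `MIN_POOL_SIZE` plans pass) and the choice to exclude plans with no star rating.

```python
from typing import Optional

STAR_THRESHOLDS = (4.0, 3.0)  # from the text: 4.0+, relaxing to 3.0+
MIN_POOL_SIZE = 3             # hypothetical trigger for relaxing the gate


def quality_gate(
    plans: list[dict],
    thresholds: tuple[float, ...] = STAR_THRESHOLDS,
    min_pool: int = MIN_POOL_SIZE,
) -> tuple[list[dict], Optional[float]]:
    """Return the plans that clear the gate and the threshold that was applied."""
    pool: list[dict] = []
    used: Optional[float] = None
    for threshold in thresholds:
        used = threshold
        # Assumption: unrated plans (stars is None) never enter the pool.
        pool = [p for p in plans if p["stars"] is not None and p["stars"] >= threshold]
        if len(pool) >= min_pool:
            break  # enough plans cleared this bar; no need to relax further
    return pool, used


# Made-up markets for illustration only.
sparse_market = [
    {"plan_id": "A", "stars": 4.5},
    {"plan_id": "B", "stars": 4.0},
    {"plan_id": "C", "stars": 3.5},
    {"plan_id": "D", "stars": 3.0},
    {"plan_id": "E", "stars": 2.5},
    {"plan_id": "F", "stars": None},
]
rich_market = [
    {"plan_id": "G", "stars": 4.5},
    {"plan_id": "H", "stars": 4.0},
    {"plan_id": "I", "stars": 4.0},
    {"plan_id": "J", "stars": 3.5},
]

sparse_pool, sparse_threshold = quality_gate(sparse_market)
rich_pool, rich_threshold = quality_gate(rich_market)
print([p["plan_id"] for p in sparse_pool], sparse_threshold)
print([p["plan_id"] for p in rich_pool], rich_threshold)
```

In the sparse market only two plans reach 4.0 stars, so the gate relaxes to 3.0 and admits four. In the richer market three plans clear 4.0 and the stricter bar holds. Whatever pool comes out of the gate is what the Pareto elimination then sees.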

This is where the premium bias thread comes back. The original problem (beneficiaries defaulting to the cheapest plan) had survived into the model itself. The cost axis was doing exactly what beneficiaries do: picking the lowest number without accounting for quality. The star gate breaks that pattern. Low-quality plans that won on cost alone are filtered out before Pareto ever sees them. The cost axis still matters, but it's now competing among plans that have already cleared a quality bar.

The results against the same 152,000 enrollments:

| Metric | Model 1 | Model 2 |
|---|---|---|
| Lowest Cost - quality enrollment | 59.6% | 87.4% |
| Lowest Cost - rapid disenrollment | 13.7% | 4.3% |
| Overall recommended quality | ~78% | 86.2% |
| Overall rapid disenrollment | - | 4.6% |

Every recommendation category now exceeds 82% quality. No toxic cost-only plans survive. And the benchmark that matters most: Model 2 matches experienced field agent performance - 86.2% quality and 4.6% disenrollment, compared to 86.7% and 4.7% for the best-performing agent segment.

Additional validation confirmed this wasn't due to differential carrier access: even when limiting analysis to national carriers that both agent types can access, the quality gap persisted (22.4 percentage points), with 83% attributable to plan selection differences within identical carriers. The problem is systematic plan selection patterns, not contracting or market access differences.

External research further validated this focus on plan selection over alternative approaches. Academic studies and industry reports confirmed that plan selection quality has more sustained impact on enrollment outcomes than lead management quality, with plan characteristics predicting long-term satisfaction better than marketing channel or agent type. This supports Plan Fit's thesis that decision support tools addressing plan selection create more value than improvements to lead generation or marketing practices.

We traded theoretical elegance for practical outcomes, and the data confirmed it was the right call.

What's Next

CMS data can tell you what a plan costs, what it covers, and how it's rated. But it can't tell you everything a beneficiary and their agent need to make a confident decision. Two critical data points sit outside the CMS ecosystem.

The first is prescription drug pricing. CMS publishes formulary data (which drugs each plan covers and at what tier) but not what a beneficiary will actually pay at the pharmacy. For that, we integrate with SunFire, an enrollment platform used by many Medicare agents, which provides exact drug cost calculations. A beneficiary enters their drug list, and we show what they'd pay per month on each plan. Importantly, backtesting showed that drug costs work best as a personalized layer on top of the model; baking a uniform drug list into the Pareto axes actually degraded recommendation quality.

The second is provider networks. Whether a beneficiary's doctor is in-network is often the single most important factor in plan satisfaction, but provider directory data isn't part of the CMS plan files. SunFire's provider data will power carrier-level elimination. If your doctor isn't in a carrier's network, none of that carrier's plans should be recommended.
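Carrier-level elimination is a set-membership check once the network data is in hand. The sketch below assumes a simple mapping from carrier to in-network provider IDs. That data shape, the names, and the rule that every preferred provider must be in-network are all hypothetical; this is not SunFire's API or data format.

```python
def eliminate_by_network(
    plans: list[dict],
    carrier_networks: dict[str, set[str]],
    preferred_providers: list[str],
) -> list[dict]:
    """Keep only plans whose carrier has every preferred provider in-network."""
    needed = set(preferred_providers)
    return [
        p
        for p in plans
        # A carrier with no network data is treated as having no providers,
        # unless the beneficiary named none (an empty set is always satisfied).
        if needed <= carrier_networks.get(p["carrier"], set())
    ]


# Made-up data for illustration only.
carrier_networks = {
    "Carrier X": {"dr-lee", "dr-patel"},
    "Carrier Y": {"dr-lee"},
}
plans = [
    {"plan_id": "X-1", "carrier": "Carrier X"},
    {"plan_id": "X-2", "carrier": "Carrier X"},
    {"plan_id": "Y-1", "carrier": "Carrier Y"},
    {"plan_id": "Z-1", "carrier": "Carrier Z"},  # no network data available
]

remaining = eliminate_by_network(plans, carrier_networks, ["dr-lee", "dr-patel"])
print([p["plan_id"] for p in remaining])
```

Because the check happens at the carrier level, one missing doctor removes all of that carrier's plans at once, which is why this step belongs early in the funnel.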

With these integrations underway, the path forward is production deployment: engineering picks up the validated model, agents are trained on the tool, and Plan Fit is integrated into existing workflows. Model 2 is validated against historical data, but the real test comes after launch, when agents use the tool with real beneficiaries making real decisions. This case study will be updated with those results.